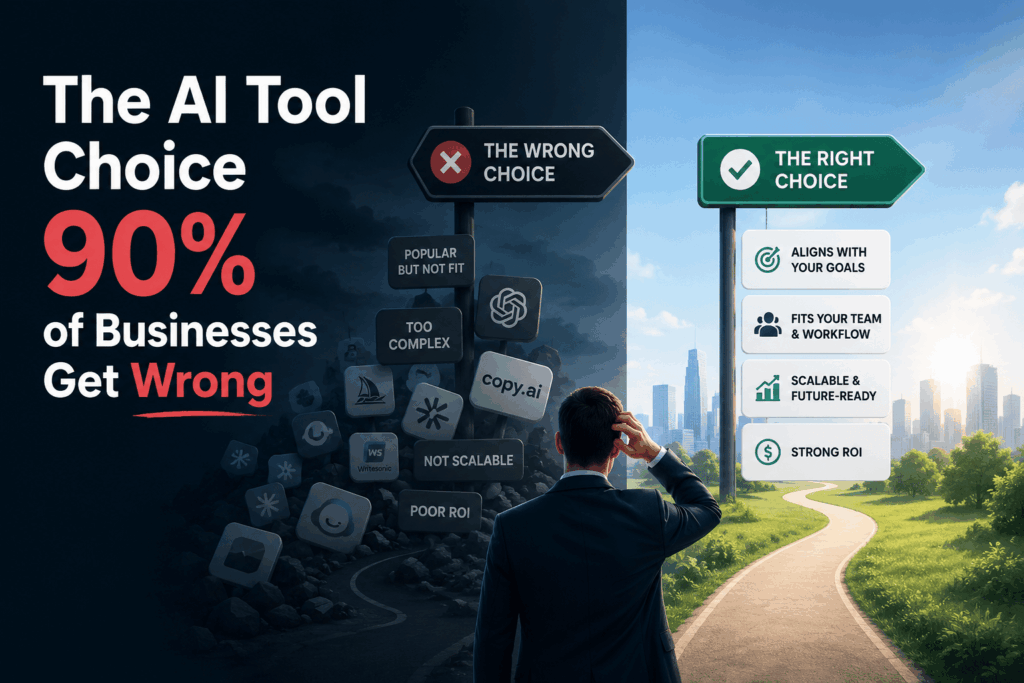

The AI Tool Choice 90% of Businesses Get Wrong

Stop chasing every new AI tool and start solving actual problems in your business. Map your real bottlenecks first, then find the technology that fixes them, not the other way around.

Every week brings another AI tool promising to transform your operations. Your inbox fills with demos and your competitors announce their newest tech. How do you choose the right AI tools for your business instead of just following trends? Start by ignoring what everyone else is doing.

How Do You Choose the Right AI Tools by Mapping Real Pain Points?

Most companies start with the tool and invent a problem later. This approach wastes money and frustrates teams who get stuck with software they don’t need. You need to flip this process completely around.

Write down the three biggest bottlenecks in your operations right now. Be specific about where time vanishes or where errors cost you money. Don’t write “improve customer service.” Write “reduce average email response time from six hours to one hour.”

One retail company spent months evaluating chatbots because competitors had them. They eventually realized their real problem was inventory forecasting. They bought demand prediction software instead and cut overstock by thirty percent. The chatbot would have been useless for their actual challenge.

Your pain points reveal what you actually need. Generic problems lead to generic solutions. Specific problems point you toward specific tools worth considering.

How Do You Choose the Right AI Tools Through Honest Resource Assessment?

Every AI tool comes with hidden costs beyond the monthly subscription. You need technical infrastructure. You need people who can manage the system. You need clean data to feed it. Most businesses skip this assessment and regret it.

A design agency bought advanced image generation software with exciting capabilities. Their team spent three months just organizing files to make the tool functional. They had excellent software but terrible readiness. The project stalled completely.

Start by auditing your current technical capacity without exaggeration. Can your team handle API integrations? Do you have someone to troubleshoot when things break? Is your data already structured or scattered across twelve different spreadsheets?

If your infrastructure is limited, you need tools with simple setup. If your team lacks technical skills, you need interfaces anyone can learn quickly. Powerful features mean nothing if implementation exceeds your actual capabilities.

Be brutally honest about time resources too. Some tools need daily supervision. Others run independently once configured properly. Match the maintenance requirements to the attention your team can actually spare.

Testing Prototypes Before Making Long Commitments

Free trials are worthless if you don’t structure them properly. Most companies click around for a week and make decisions based on surface impressions. You need a real test with measurable outcomes.

Pick one specific task the tool should accomplish during your trial. Set a clear success metric before you start testing. If you’re evaluating writing software, don’t just “try it out.” Draft ten customer emails and measure time saved compared to your current method.
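A structured trial like this can be scored with a few lines of code. The sketch below is only an illustration: the function names, the sample timings, and the 25 percent target are all made-up assumptions, not figures from any real trial. It compares the average minutes per task under your current method with the average during the trial and checks the result against a target set in advance.

```python
from statistics import mean


def time_saved_summary(baseline_minutes, trial_minutes):
    """Compare average minutes per task before and during a trial."""
    baseline_avg = mean(baseline_minutes)
    trial_avg = mean(trial_minutes)
    saved = baseline_avg - trial_avg
    return {
        "baseline_avg": baseline_avg,
        "trial_avg": trial_avg,
        "saved_per_task": saved,
        "percent_saved": 100 * saved / baseline_avg,
    }


def trial_passed(summary, target_percent):
    """True if the trial met the success metric chosen before testing."""
    return summary["percent_saved"] >= target_percent


# Hypothetical timings (minutes) for ten customer emails.
baseline = [12, 15, 10, 14, 13, 11, 16, 12, 14, 13]  # current method
trial = [8, 9, 7, 10, 8, 9, 11, 8, 10, 10]           # with the tool

summary = time_saved_summary(baseline, trial)
print(summary)
print("Met 25% target:", trial_passed(summary, target_percent=25.0))
```

The point of fixing `target_percent` before the trial starts is that the decision rests on the number you committed to, not on how the tool felt after a week.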

A consulting firm tested three project management tools simultaneously. They assigned identical small projects to different teams using different software. After two weeks, they had concrete data about which system actually improved workflow. One tool looked impressive but slowed everything down. Another seemed basic but cut coordination time in half.

First impressions were wrong on both counts.

Test with real work, not hypothetical scenarios. Use actual data from your business. Involve the people who will use the tool daily. Their feedback matters more than executive opinions about slick interfaces.

Document what breaks during testing. Every tool has weaknesses. You need to know if those weaknesses disrupt your critical operations. A minor bug that affects features you’ll never use is fine. A glitch in core functionality is a dealbreaker.

How Do You Choose the Right AI Tools by Calculating True ROI?

Return on investment for AI tools goes far beyond simple cost savings. You need to account for implementation time, training expenses, and opportunity costs. Some tools take months to deliver any value at all.

One manufacturing company bought quality control software for twenty thousand annually. Implementation required forty hours from their senior engineer. Training took another thirty hours across the team. The tool saved two hours weekly once fully operational. At that rate, they needed thirty-five weeks just to recover the seventy hours they had invested, before counting the subscription cost at all.

Calculate how long until the tool pays for itself in saved hours. Multiply your team’s hourly rate by the hours spent on implementation and training, and add that to the subscription costs. Then measure the actual time savings the tool delivers per week or month and compare the two.
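Here is one rough way to run that calculation. Treat it as a sketch under stated assumptions: the function name `payback_weeks`, the hourly rate, and the weekly subscription figures are all illustrative, and the model assumes the time savings stay constant every week.

```python
import math
from typing import Optional


def payback_weeks(
    hourly_rate: float,
    setup_hours: float,
    hours_saved_per_week: float,
    subscription_per_week: float = 0.0,
) -> Optional[int]:
    """Weeks until savings cover the setup cost, or None if they never do."""
    # One-time cost: staff hours spent on implementation and training.
    upfront_cost = hourly_rate * setup_hours
    # Ongoing benefit: value of the hours saved, minus the subscription.
    net_weekly_benefit = hourly_rate * hours_saved_per_week - subscription_per_week
    if net_weekly_benefit <= 0:
        return None  # the tool costs more each week than it saves
    return math.ceil(upfront_cost / net_weekly_benefit)


# Illustrative figures only: 70 setup hours, 2 hours saved per week.
print(payback_weeks(50.0, 70, 2))         # time investment only
print(payback_weeks(50.0, 70, 2, 60.0))   # with a modest weekly subscription
print(payback_weeks(50.0, 70, 2, 150.0))  # subscription outweighs the savings
```

A result of `None` is the case worth watching for: if the subscription costs more per week than the hours saved are worth, no amount of waiting makes the tool pay for itself on time savings alone.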

Some tools improve quality instead of saving time directly. Fewer errors mean fewer customer complaints and less rework. This value is harder to measure but just as real. Track error rates before and during your trial period.

Watch out for tools that shift work rather than eliminate it. An AI scheduler that still requires manual oversight hasn’t actually automated anything. You’ve just changed which tasks consume your time. True ROI comes from work that simply disappears.

How Do You Choose the Right AI Tools When Evaluating Vendor Stability?

The AI landscape changes faster than almost any other technology sector. Companies appear, get acquired, or shut down with minimal warning. You need vendors who will still exist in three years.

Check how long the company has been operating and whether they’re profitable. Venture funding can disappear quickly in changing markets. A bootstrapped company with steady revenue is often more reliable than a hyped startup burning through capital.

Read the vendor’s update history and development pace. Companies that ship improvements regularly are more likely to maintain their product. Long gaps between updates suggest problems behind the scenes.

A marketing agency built their entire content workflow around an AI tool. Eighteen months later, the vendor suddenly announced they were shutting down. The agency spent two months rebuilding processes from scratch. They should have checked the vendor’s financial health before committing so deeply.

Look at customer reviews from at least a year ago. Recent reviews tell you about current features. Old reviews show how the company treats customers over time. Do they honor commitments? Do they maintain backwards compatibility when updating?

Building Internal Expertise Instead of Vendor Dependence

The best AI implementations come from understanding how the tools actually work. You don’t need a computer science degree. You do need someone who can troubleshoot basic problems without calling support constantly.

Designate one person to become your internal expert during implementation. They should complete every training module the vendor offers. They should understand the tool’s limitations and workarounds. This person becomes your first line of defense when issues arise.

A law firm bought document analysis software but never learned it beyond the surface. Every small problem required a support ticket and delayed their work. They eventually hired a consultant to train their team properly. That training investment paid for itself within weeks through reduced downtime.

Document your specific workflows and configurations as you build them. Vendors provide generic documentation. You need records of exactly how you’ve customized the tool for your business. When staff changes or problems emerge, this documentation becomes invaluable.

Internal expertise also helps you spot when you’ve outgrown a tool. You’ll recognize efficiency limits before they become serious bottlenecks. You can plan migrations strategically instead of reacting to crises.

How Do You Choose the Right AI Tools Through Staged Implementation?

Rolling out AI across your entire operation at once is a recipe for chaos. You need a staged approach that lets you learn and adjust. Start with one department or one specific use case.

Choose a pilot project where success is easy to measure and failure won’t cripple operations. A good pilot project has clear boundaries and defined outcomes. It should take weeks, not months, to show results.

An accounting firm wanted to automate data entry across all client accounts. They wisely started with just five small clients. They discovered the tool struggled with handwritten receipts. They adjusted their process before expanding to larger clients with more complex needs.

Learn from your pilot before expanding. What took longer than expected? What surprised your team? What features do you actually use versus what you thought you’d need? These insights guide your next implementation phase.

Staged rollouts also give you escape options. If the tool isn’t working, you’ve only disrupted a small part of your business. You can pivot to a different solution without catastrophic consequences. Test incrementally and commit gradually.

Matching Tool Complexity to Actual Problem Scope

The most advanced tool is rarely the right answer. You need the simplest solution that solves your specific problem completely. Extra features create confusion and slow down adoption.

A small publishing company looked at enterprise content management systems with hundreds of features. They ultimately chose a simple writing assistant with basic formatting tools. It solved their actual problem at one-tenth the cost. The enterprise systems offered capabilities they would never touch.

Complexity increases training time and reduces team adoption. People avoid tools that feel overwhelming or unnecessarily complicated. Simple tools with focused features get used daily. Powerful tools with steep learning curves gather dust.

Evaluate each major feature the vendor highlights in their pitch. Will you actually use this feature weekly? Does it address a problem you currently have? If you can’t answer yes to both questions, that feature is noise.

One exception is worth knowing.

Some problems genuinely need sophisticated solutions. Processing thousands of support tickets requires more power than handling fifty monthly inquiries. Match the tool’s capacity to your actual volume. Don’t pay for scale you won’t reach for years.

Start your AI tool selection by documenting one specific problem you need solved today.

Frequently Asked Questions

How long should I test an AI tool before committing?

Test for at least two full work cycles with real projects. Two weeks minimum for most business tools. Include the people who will use it daily. Measure specific outcomes like time saved or errors reduced. Surface impressions from quick demos don’t reveal actual value or problems.

What if my competitors are using AI tools I don’t have?

Your competitors might be wasting money on tools they don’t need. Focus on your specific operations and pain points. Different businesses need different solutions even in the same industry. Copying competitor choices without understanding your own needs leads to poor investments.

Should I choose specialized AI tools or all-in-one platforms?

Choose specialized tools that excel at solving your specific problems. All-in-one platforms often do many things poorly instead of one thing well. Specialized tools often integrate with other software you already use, but confirm that before you buy. Simplicity beats comprehensiveness when functionality actually matches your needs.

How do I know if my team is ready for AI tools?

Your team is ready when you have clean data and clear processes. AI tools amplify existing workflows. They don’t fix broken operations or messy information. Start by organizing your current systems. Then add AI tools to improve specific steps in functioning processes.

What’s the biggest mistake businesses make when choosing AI tools?

They choose based on impressive demos instead of real testing with actual work. Vendors show perfect scenarios that don’t match daily reality. Always test tools with your own data and your own workflows. Measure results against specific goals you set before starting the trial.